Author

Chris Honore

Published

11 May 2026

Form Number

LP2435

PDF size

7 pages, 175 KB

Abstract

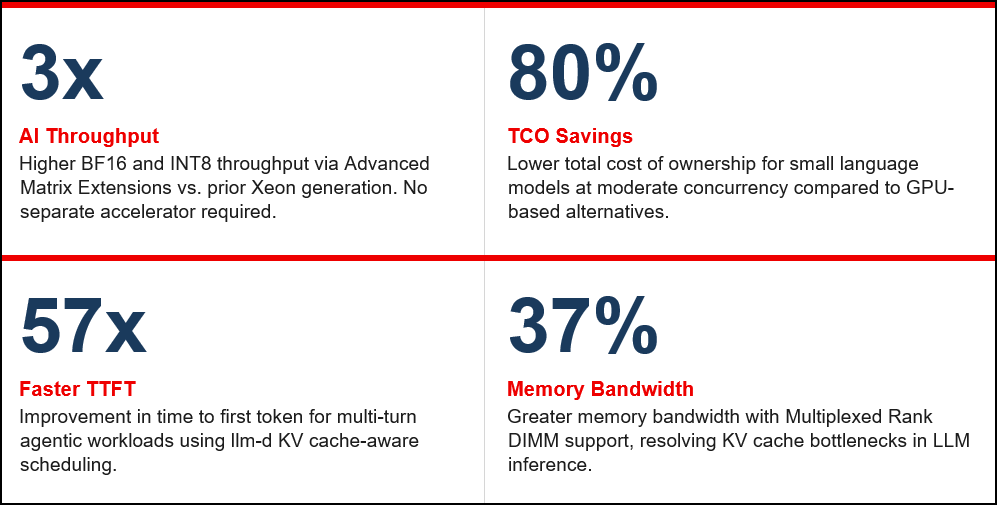

Lenovo ThinkSystem V4 servers, powered by Intel® Xeon® 6 (Granite Rapids) processors and Red Hat AI 3.4, deliver a validated enterprise AI inference platform that challenges the GPU-only paradigm. With Red Hat AI 3.4's first-class Xeon support, organizations can deploy LLM serving, Retrieval Augmented Generation, and agentic AI pipelines on CPU infrastructure with no code changes, up to 80% lower TCO versus GPU alternatives for small language models at moderate concurrency, and unified OpenShift AI operations.

Key capabilities include Advanced Matrix Extensions for 3x higher AI throughput, MRDIMM support for 37% greater memory bandwidth, Intel TDX for confidential computing, and llm-d scheduling for up to 57x faster time-to-first-token in agentic workloads.

Introduction

Lenovo ThinkSystem V4 servers, powered by Intel® Xeon® 6 (Granite Rapids) processors and Red Hat AI 3.4, deliver a validated, production-ready enterprise AI inference platform that removes the GPU-or-nothing constraint for the majority of real-world AI workloads.

Red Hat AI 3.4, commercially launched in Q2 2026, is the first release to treat Intel Xeon as a fully supported, first-class inference target. Enterprises can deploy high-performance LLM serving, Retrieval Augmented Generation (RAG), and agentic AI pipelines on CPU infrastructure with zero code changes. For small language models at moderate concurrency, total cost of ownership (TCO) is up to 80% lower than GPU alternatives, and the OpenShift AI operational experience is identical on Xeon and GPU nodes.

Xeon 6 builds AI acceleration into the processor: Advanced Matrix Extensions (AMX) for 3x higher BF16 and INT8 throughput, MRDIMM support for 37% greater memory bandwidth, and Intel TDX for confidential in-memory data protection. The llm-d KV-cache-aware scheduling framework adds up to 57x faster time to first token (TTFT) for multi-turn agentic workloads. Together, these give ThinkSystem V4 servers a scalable, right-sized foundation that organizations can deploy today and grow incrementally, from CPU-only inference to hybrid CPU plus GPU environments, as workload demands increase.

Red Hat AI 3.4: Full Commercial Xeon Support

The Q2 2026 release of Red Hat AI 3.4 delivers the industry's first fully commercial, enterprise-grade AI platform that treats Intel Xeon as a first-class inference target on par with GPU nodes. Customers running Lenovo ThinkSystem V4 servers get the same Red Hat OpenShift AI deployment workflow on Xeon as on GPU, with no separate toolchain, no code changes, and full support for the AVX2, AVX-512, and AMX instruction sets from day one.

- Unified control plane: auto-scaling, RBAC, audit logging, and model governance for Xeon and GPU nodes alike

- Quickstarts catalog: ready-to-run, production-optimized examples for LLM serving, RAG, agentic AI, AI virtual agents, guardrail LLMs, and edge inference at docs.redhat.com/en/learn/ai-quickstarts

- Validated model catalog on Hugging Face: Llama 3.1 and 4, Granite 3.1 and 3.2, Qwen 2.5 and 3, Phi-4, Gemma 2, Mistral 7B, plus embedding models for RAG

- Phased adoption path: start with CPU-only inference, progress to RAG pipelines, then scale to hybrid CPU plus GPU as concurrency demands grow
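The "no code changes" point can be illustrated from the client side. vLLM exposes an OpenAI-compatible HTTP API, so a request to a Xeon-backed endpoint can look the same as one sent to a GPU-backed endpoint. The following is a minimal sketch rather than a validated configuration: the endpoint URLs and the model name `example-model` are hypothetical placeholders, and only the Python standard library is used.

```python
import json
import urllib.request

# Hypothetical endpoints: substitute the routes exposed by your own deployment.
XEON_ENDPOINT = "http://xeon-inference.example.internal:8000"
GPU_ENDPOINT = "http://gpu-inference.example.internal:8000"


def build_chat_request(base_url, model, prompt, max_tokens=128):
    """Build an OpenAI-style chat completion request for a vLLM server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the text of the first completion."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Only the base URL differs between the two targets; the payload is identical.
prompt = "Summarize our returns policy."
xeon_req = build_chat_request(XEON_ENDPOINT, "example-model", prompt)
gpu_req = build_chat_request(GPU_ENDPOINT, "example-model", prompt)
```

Calling `send(xeon_req)` or `send(gpu_req)` would issue the request against a live server. In practice the base URL is usually supplied by configuration, so the application code does not need to know which hardware serves the model.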

Intel Xeon 6 (Granite Rapids): Purpose Built for AI Inference

Intel Xeon 6 is the first Xeon generation explicitly architected for datacenter-scale AI inference. Every capability below is built into the processor, requires no separate accelerator, and is fully exposed through the Red Hat AI 3.4 and vLLM software stack on ThinkSystem V4 servers.

| Capability | Impact on AI Inference |
|---|---|
| Advanced Matrix Extensions (AMX) | 3x higher throughput for BF16 and INT8.[1] No separate accelerator needed. 2.8x faster TPOT for Llama 3.1 8B vs. AMD EPYC 9755.[2] |
| Priority Core Turbo (PCT) | 17% frequency boost on designated cores for sequential processing, data validation, and GPU feeding in hybrid clusters.[3] |
| MRDIMM Memory Support | 37% higher bandwidth vs. standard RDIMMs. Resolves KV cache bottlenecks that limit LLM inference throughput.[4] |
| Up to 128 cores and 500 MB LLC[1] | Massive on-die cache reduces memory latency for autoregressive token generation. Supports up to 96 concurrent users per dual-socket node. |
| Intel Trust Domain Extensions (TDX) | VM level hardware encryption isolates AI models and user data from the hypervisor. Enables confidential inference for regulated industries.[5] |
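Before scheduling inference on a node, it can be useful to confirm that the operating system actually reports the instruction sets listed above. The sketch below parses the `flags` line of `/proc/cpuinfo` on Linux and checks for the kernel's flag names for AVX2, AVX-512 Foundation, and AMX. The function names and the grouping of flags are illustrative assumptions, not part of any Lenovo, Intel, or Red Hat tool.

```python
# Flag names as reported by the Linux kernel in /proc/cpuinfo on x86.
REQUIRED_FLAGS = {
    "AVX2": {"avx2"},
    "AVX-512": {"avx512f"},
    "AMX": {"amx_tile", "amx_bf16", "amx_int8"},
}


def parse_cpu_flags(cpuinfo_text):
    """Return the set of feature flags from the first 'flags' line."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def report_isa_support(flags):
    """Map each instruction set family to True if all of its flags are present."""
    return {name: needed <= flags for name, needed in REQUIRED_FLAGS.items()}


def read_host_flags(path="/proc/cpuinfo"):
    """Read and parse the flags of the current Linux host."""
    with open(path, encoding="utf-8") as handle:
        return parse_cpu_flags(handle.read())


# Example with a shortened, made-up cpuinfo excerpt.
sample = "processor\t: 0\nflags\t\t: fpu sse2 avx2 avx512f amx_bf16 amx_tile amx_int8\n"
print(report_isa_support(parse_cpu_flags(sample)))
```

On a real node, `report_isa_support(read_host_flags())` gives the same report for the host. Inside a virtual machine or container, the visible flags depend on what the hypervisor exposes, so a missing flag does not always mean the silicon lacks the feature.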

Strategic Fit and Key Use Cases

For the dominant enterprise AI tier (small language models in the 7B to 20B parameter range at a moderate concurrency of 10 to 50 users), Xeon 6 on ThinkSystem V4 servers delivers performance within service-level objectives (SLOs) at up to 80% lower TCO than GPU-based alternatives. Four validated use cases drive the majority of enterprise deployments:

Retrieval Augmented Generation (RAG)

Embedding generation, vector search, and reranking are CPU-friendly workloads. Xeon 6 PCIe 5.0 NVMe throughput accelerates document ingestion and retrieval, delivering 2.7x higher embedding throughput than the prior Xeon generation. Validated models include Granite-embedding-278m-multilingual and all-MiniLM-L6-v2.
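To make the vector search step concrete, the sketch below ranks documents by cosine similarity against a query vector. It is a toy illustration under stated assumptions: the three-dimensional vectors and document IDs are invented stand-ins for the output of an embedding model, and a production RAG pipeline would normally use a vector database rather than a linear scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat a zero vector as matching nothing
    return dot / (norm_a * norm_b)


def top_k(query_vec, index, k=2):
    """Return the IDs of the k documents most similar to the query.

    index is a list of (doc_id, vector) pairs.
    """
    scored = [(cosine_similarity(query_vec, vec), doc_id) for doc_id, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Invented toy embeddings; real embeddings have hundreds of dimensions.
index = [
    ("doc-a", [1.0, 0.0, 0.0]),
    ("doc-b", [0.0, 1.0, 0.0]),
    ("doc-c", [0.9, 0.1, 0.0]),
]
print(top_k([1.0, 0.0, 0.0], index, k=2))
```

The retrieved IDs would then be passed to a reranker and inserted into the LLM prompt as context.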

Agentic AI with llm-d

Sequential agent loops are latency-bound rather than throughput-bound. The llm-d framework, included in Red Hat AI 3.4, uses KV-cache-aware scheduling to route multi-turn requests to pods where the prior conversation cache already resides, eliminating redundant prefill computation and delivering up to 57x faster time to first token on a cache hit.
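The routing idea can be sketched in a few lines. The example below is an illustrative toy, not llm-d's implementation or API: it hashes block-aligned prefixes of a token sequence, remembers which pod has seen each prefix, and sends a new request to the pod holding the longest matching prefix, falling back to the least-loaded pod. The class name, block size, and pod names are all assumptions made for illustration.

```python
import hashlib


def prefix_hashes(tokens, block_size=4):
    """Hash each block-aligned prefix of a token sequence."""
    hashes = []
    for end in range(block_size, len(tokens) + 1, block_size):
        text = " ".join(tokens[:end])
        hashes.append(hashlib.sha256(text.encode("utf-8")).hexdigest())
    return hashes


class CacheAwareRouter:
    """Toy router that prefers pods already holding a request's prefix."""

    def __init__(self, pods):
        self.cached = {pod: set() for pod in pods}  # pod -> prefix hashes seen
        self.load = {pod: 0 for pod in pods}        # pod -> requests routed

    def _cached_blocks(self, pod, hashes):
        """Count leading prefix blocks that the pod is assumed to have cached."""
        count = 0
        for digest in hashes:
            if digest not in self.cached[pod]:
                break
            count += 1
        return count

    def route(self, tokens):
        """Return (pod, cached_blocks) for a tokenized request."""
        hashes = prefix_hashes(tokens)
        # Longest cached prefix wins; ties go to the pod with the lowest load.
        best = max(
            self.cached,
            key=lambda pod: (self._cached_blocks(pod, hashes), -self.load[pod]),
        )
        hit_blocks = self._cached_blocks(best, hashes)
        self.cached[best].update(hashes)
        self.load[best] += 1
        return best, hit_blocks
```

In a multi-turn conversation, each new turn extends the previous token sequence, so its leading blocks hash to the same values and the request returns to the pod that served the earlier turns. Only the newly appended tokens then need prefill, which is where the time-to-first-token saving comes from.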

Confidential AI Inference

Intel TDX on ThinkSystem V4 servers isolates AI models and user queries within hardware-encrypted Trust Domains, protecting them from unauthorized access, including at the hypervisor layer. For hybrid confidential GPU inference, TDX combines with the NVIDIA encrypted bounce buffer approach to protect data transfers between the confidential VM and the GPU. This pattern requires OpenShift Sandboxed Containers, Red Hat build of Trustee for attestation, and Kata Containers for TDX-protected VMs. Expect modest performance tradeoffs due to encryption and attestation overhead, which should be evaluated during design. This capability is critical for healthcare, financial services, and sovereign AI deployments that require data-in-use protection.

Hybrid CPU plus GPU Inference

ThinkSystem V4 servers support PCIe 5.0 GPU expansion, enabling Xeon to handle orchestration, small model serving under 20B parameters, and guardrail workloads while GPU nodes serve larger models. A semantic router via llm-d dynamically directs requests to the optimal backend, improving overall GPU utilization and reducing queue contention.
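The division of labor described above can be expressed as a simple placement policy. The sketch below is a rule-based stand-in for the semantic router, not llm-d's routing logic: it uses the 20B parameter threshold from the text, and the request fields, workload labels, and backend names are hypothetical.

```python
from dataclasses import dataclass

# Threshold taken from the text: models under 20B parameters stay on Xeon.
CPU_MAX_PARAMS_B = 20.0

# Workload types the text assigns to Xeon regardless of other traffic.
CPU_WORKLOADS = {"guardrail", "orchestration"}


@dataclass(frozen=True)
class InferenceRequest:
    model_params_b: float  # model size in billions of parameters
    workload: str          # hypothetical label, e.g. "chat" or "guardrail"


def choose_backend(request):
    """Return "xeon" or "gpu" for a request under the simple policy above."""
    if request.workload in CPU_WORKLOADS:
        return "xeon"
    if request.model_params_b < CPU_MAX_PARAMS_B:
        return "xeon"
    return "gpu"


print(choose_backend(InferenceRequest(model_params_b=8.0, workload="chat")))
print(choose_backend(InferenceRequest(model_params_b=70.0, workload="chat")))
```

A real router would also weigh queue depth, cache locality, and request content, but the sketch shows why keeping small models and guardrail checks on CPU frees GPU capacity for the models that need it.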

Reference Architecture and Ecosystem

The validated architecture deploys Red Hat OpenShift Container Platform and Red Hat OpenShift AI on Lenovo ThinkSystem V4 server nodes with Intel Xeon 6, supporting three incremental deployment tiers: CPU-only inference for immediate value, hybrid CPU plus GPU for scale, and Intel TDX confidential computing for security-sensitive workloads.

| Component | Detail |
|---|---|
| Lenovo ThinkSystem V4 servers | 2U dual-socket, up to 128 cores per socket, up to 8 TB DDR5 with MRDIMM, 32x PCIe 5.0 NVMe, optional GPU expansion, Intel TDX, XCC3 management |
| Red Hat OpenShift AI 3.4 | Q2 2026 GA. Full Xeon AMX support. Unified lifecycle management, auto-scaling, RBAC, model governance. |
| vLLM with CPU AMX backend | Upstream merged CPU support. Zero code changes for standard LLM inference workloads. |
| llm-d Inference Scheduler | KV cache aware distributed scheduling. 57x TTFT improvement for multi-turn agentic workloads. |
| Red Hat OpenShift Data Foundation | Persistent storage for RAG vector stores, model registry, and embedding databases. |
| Intel TDX plus Red Hat Trustee | Confidential computing attestation. Protects model weights and user data in memory. |

Conclusion

The convergence of Lenovo ThinkSystem V4 server hardware, Intel Xeon 6 processor capabilities, and Red Hat AI 3.4 platform support represents a decisive step toward democratizing production AI across the enterprise. Organizations no longer need to wait for GPU availability, absorb GPU-level infrastructure costs, or accept operational complexity to run meaningful AI workloads at scale. With validated performance across small language models, RAG pipelines, agentic workflows, and confidential computing use cases, this solution provides an immediate, globally available, and cost-effective path to production AI that grows with the business. The phased adoption model ensures that investments made today in CPU-based inference infrastructure remain relevant and expandable as workload demands evolve toward hybrid CPU and GPU environments. For enterprise teams prioritizing speed to value, total cost of ownership, data sovereignty, and operational simplicity, Lenovo ThinkSystem V4 servers with Red Hat AI 3.4 and Intel Xeon 6 are the enterprise AI platform built for where AI is going, not just where it has been.

Why Lenovo

Lenovo is a US$70 billion revenue Fortune Global 500 company serving customers in 180 markets around the world. Focused on a bold vision to deliver smarter technology for all, we are developing world-changing technologies that power (through devices and infrastructure) and empower (through solutions, services and software) millions of customers every day.

For More Information

To learn more about this Lenovo solution, contact your Lenovo sales representative or authorized channel partner, or visit:

https://www.lenovo.com/au/en/c/servers-storage/servers/racks/

References:

- Lenovo ThinkSystem SR650 V4:

- [1] Intel Corporation. "Intel® Xeon® 6 Product Brief." Intel, 2024.

- [2] Intel Corporation. "Accelerating vLLM Inference: Intel Xeon 6 Processor Advantage over AMD EPYC." Intel Community Blog, 2024.

- [3] Intel Newsroom. "New Intel Xeon 6 CPUs to Maximize GPU-Accelerated AI Performance." Intel Corporation, 2025.

- [4] Intel Newsroom. "New Ultrafast Memory Boosts Intel Data Center Chips." Intel Corporation, 2024.

- [5] Intel Corporation. "Intel® Trust Domain Extensions (Intel® TDX) Overview." Intel, 2024.

- [6] Red Hat and Intel. "AI on Intel® Xeon® Processors with Red Hat OpenShift AI — Reference Architecture for Scalable Inference and Agentic AI on Intel® Xeon® 6." Red Hat / Intel Reference Guide, May 2026.

Authors

Chris Honore is a Solutions Product Manager at Lenovo with deep expertise in datacenter products and solution offerings. He has a strong background in consulting and solution development, helping customers design and support on-premises and hybrid environments. Chris has spent the past 15 years with IBM and Lenovo, specializing in x86 server and datacenter solutions. Prior to that, he built two decades of experience in the telecommunications industry, serving in both technical and business leadership roles.

Trademarks

Lenovo and the Lenovo logo are trademarks or registered trademarks of Lenovo in the United States, other countries, or both. A current list of Lenovo trademarks is available on the Web at https://www.lenovo.com/us/en/legal/copytrade/.

The following terms are trademarks of Lenovo in the United States, other countries, or both:

Lenovo®

ThinkSystem®

The following terms are trademarks of other companies:

AMD and AMD EPYC™ are trademarks of Advanced Micro Devices, Inc.

Intel®, the Intel logo and Xeon® are trademarks of Intel Corporation or its subsidiaries.

Other company, product, or service names may be trademarks or service marks of others.
